Where Can You Discover Free DeepSeek Sources?

It not only fills a coverage gap but also sets up a data flywheel that could produce complementary effects with adjacent tools, such as export controls and inbound investment screening.

When data enters the model, the router directs it to the most appropriate experts based on their specialization. The model is available in 3B, 7B, and 15B sizes.

The benchmark pairs synthetic API function updates with program-synthesis tasks that require using the updated functionality, challenging the model to reason about the semantic changes rather than simply reproduce syntax. The goal is to test whether an LLM can solve these tasks without being explicitly shown the documentation for the updates.

It was much less complicated, though, to connect the WhatsApp Chat API with OpenAI. Is the WhatsApp API actually paid to use? After looking through the WhatsApp documentation and Indian tech videos (yes, we all did look at the Indian IT tutorials), it wasn't really much different from Slack.
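
The post says a router sends incoming data to the most appropriate experts but does not say how. The sketch below assumes a standard top-k softmax gate, a common mixture-of-experts design that may differ from DeepSeek's actual router: each expert has a hypothetical gating vector, the router scores a token's hidden vector against every expert, keeps the k best, renormalizes their weights, and mixes only those experts' outputs. `route_token`, `moe_forward`, and the toy experts are illustrative names, not a real library API.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]

def softmax(scores: Vector) -> Vector:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(token: Vector, gate_weights: List[Vector], k: int = 2) -> List[Tuple[int, float]]:
    """Return (expert_index, weight) for the k highest-scoring experts.

    gate_weights[e] is a hypothetical learned gating vector for expert e; the
    token's affinity to each expert is their dot product.
    """
    scores = [sum(t * w for t, w in zip(token, g)) for g in gate_weights]
    probs = softmax(scores)
    # Pick the k most probable experts, highest first.
    top = sorted(range(len(probs)), key=lambda e: probs[e], reverse=True)[:k]
    # Renormalize so the selected experts' weights sum to 1.
    norm = sum(probs[e] for e in top)
    return [(e, probs[e] / norm) for e in top]

def moe_forward(token: Vector, gate_weights: List[Vector],
                experts: List[Callable[[Vector], Vector]], k: int = 2) -> Vector:
    """Run only the routed experts and return their weighted sum."""
    out = [0.0] * len(token)
    for e, weight in route_token(token, gate_weights, k):
        y = experts[e](token)
        out = [o + weight * v for o, v in zip(out, y)]
    return out

if __name__ == "__main__":
    # Four toy experts over 2-d vectors, each just scaling its input.
    gate = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
    experts = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
    token = [2.0, 1.0]
    print(route_token(token, gate, k=2))  # experts 0 and 1 are selected
    print(moe_forward(token, gate, experts, k=2))
```

Only the k selected experts run for each token, which is why a mixture-of-experts model can hold many parameters while spending compute on only a fraction of them per token.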

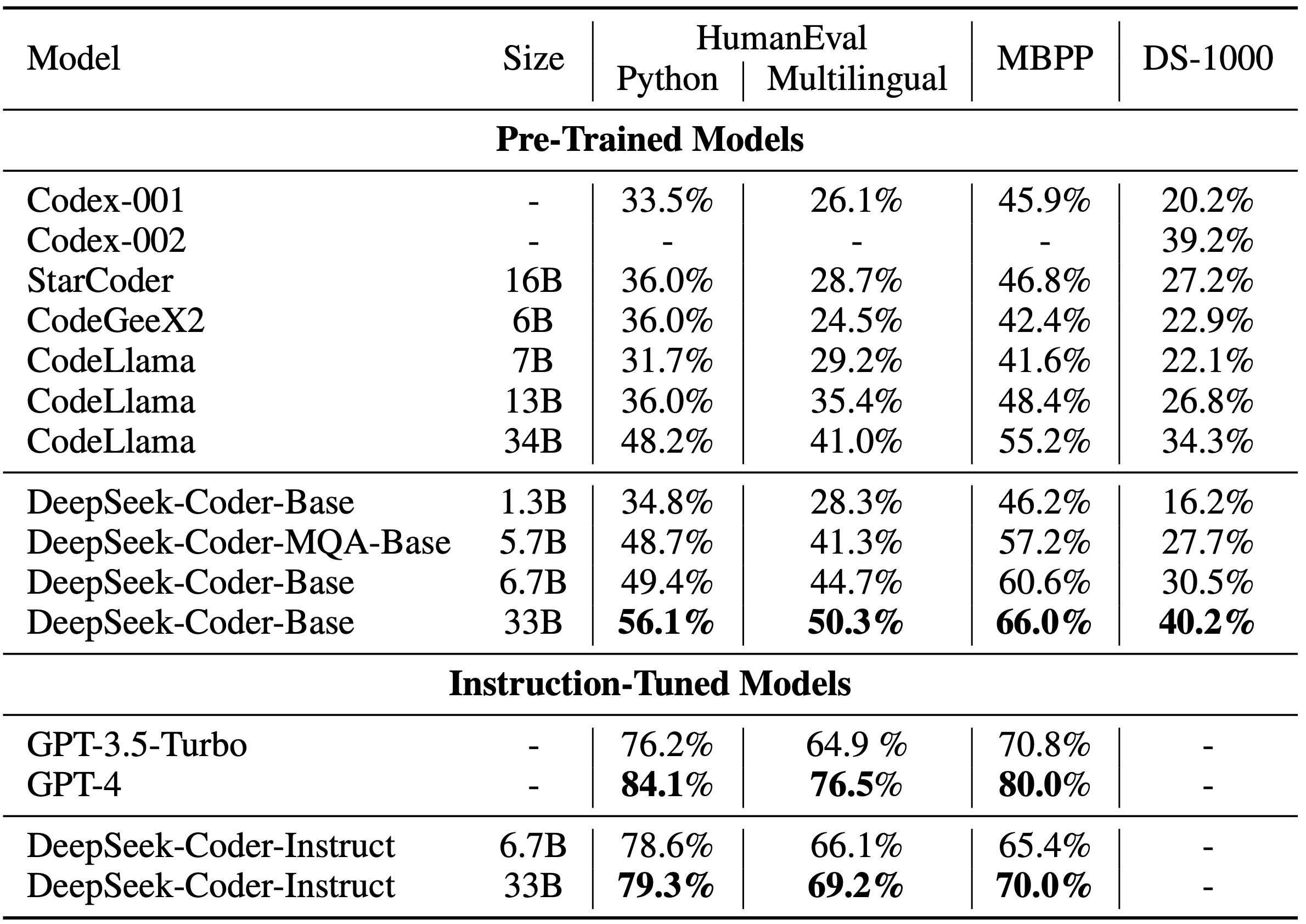

The model's state-of-the-art performance across various benchmarks indicates strong capabilities in the most common programming languages. This addition improves not only Chinese multiple-choice benchmarks but also English ones. The developers' initial attempt to beat the benchmarks produced fairly mundane models, much like many others.

The paper presents the CodeUpdateArena benchmark to test how well large language models (LLMs) can update their knowledge of code APIs that are continually evolving, so that they keep up with these real-world changes. The objective is to update an LLM so that it can solve programming tasks that depend on API changes without being given the documentation for those changes at inference time. Overall, CodeUpdateArena is an important contribution to ongoing efforts to improve the code-generation capabilities of LLMs and make them more robust to the evolving nature of software development.
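
To make the benchmark idea concrete, here is a toy sketch of what one item might look like. It is not an actual CodeUpdateArena entry: the `top_k` function, its assumed "old" behaviour, the synthetic update adding a keyword-only `key` parameter, and the `UpdateTask`/`passes` names are all hypothetical. The item pairs the update with a task that needs it, then scores candidate solutions by running them against the updated API; a solution written only against the old signature fails because it ranks words alphabetically instead of by length.

```python
import heapq
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Optional, Tuple

def top_k(values: List[Any], k: int, *, key: Optional[Callable[[Any], Any]] = None) -> List[Any]:
    """Hypothetical library function *after* a synthetic update.

    Assumed old behaviour: top_k(values, k) ranked items by natural order.
    Assumed update: a new keyword-only `key` lets callers rank by a derived value.
    """
    return heapq.nlargest(k, values, key=key)

@dataclass
class UpdateTask:
    """One benchmark-style item: an API update plus a task that needs it."""
    update_doc: str    # description of the change; NOT shown to the model at inference
    prompt: str        # what the model is asked to implement
    entry_point: str   # function name the candidate code must define
    cases: List[Tuple[tuple, Any]] = field(default_factory=list)  # (args, expected)

def passes(task: UpdateTask, candidate_src: str) -> bool:
    """Run candidate code with the *updated* API in scope and check every case."""
    namespace: Dict[str, Any] = {"top_k": top_k}
    try:
        exec(candidate_src, namespace)  # toy harness only; a real one would sandbox this
        fn = namespace[task.entry_point]
        return all(fn(*args) == expected for args, expected in task.cases)
    except Exception:
        return False

task = UpdateTask(
    update_doc="top_k now accepts a keyword-only `key` function used for ranking.",
    prompt="Write longest_two(words): the two longest words, longest first, using top_k.",
    entry_point="longest_two",
    cases=[((["ant", "giraffe", "zebra", "ox"],), ["giraffe", "zebra"])],
)

# Reasons about the semantic change: ranks by length through the new `key`.
updated_solution = "def longest_two(words):\n    return top_k(words, 2, key=len)\n"
# Written against the old API only: ranks alphabetically, so it fails.
stale_solution = "def longest_two(words):\n    return top_k(words, 2)\n"

if __name__ == "__main__":
    print("updated:", passes(task, updated_solution))
    print("stale:", passes(task, stale_solution))
```

Scoring by execution rather than by matching source text means any solution that produces the right behaviour passes, which fits the benchmark's emphasis on semantic changes over syntax. Running untrusted model output through `exec` is acceptable only in a toy like this; a real harness would isolate it in a separate process.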

CodeUpdateArena is an important step forward in evaluating how well large language models (LLMs) handle evolving code APIs, a critical limitation of current approaches, and the insights from this research could help drive the development of more robust, adaptable models that keep pace with the rapidly changing software landscape. The paper examines how LLMs can be used to generate and reason about code, but notes that their knowledge is static: it does not change even as the code libraries and APIs they rely on are continually updated with new features and changes. Despite potential areas for further exploration, the overall approach and the results presented in the paper represent a significant step forward for large language models in mathematical reasoning, and the research is an important part of ongoing efforts to develop LLMs that can effectively tackle complex mathematical problems and reasoning tasks.

If you have any questions about where and how to use free DeepSeek, you can contact us through the page.