GitHub - Deepseek-ai/DeepSeek-Coder: DeepSeek Coder: let the Code Writ…


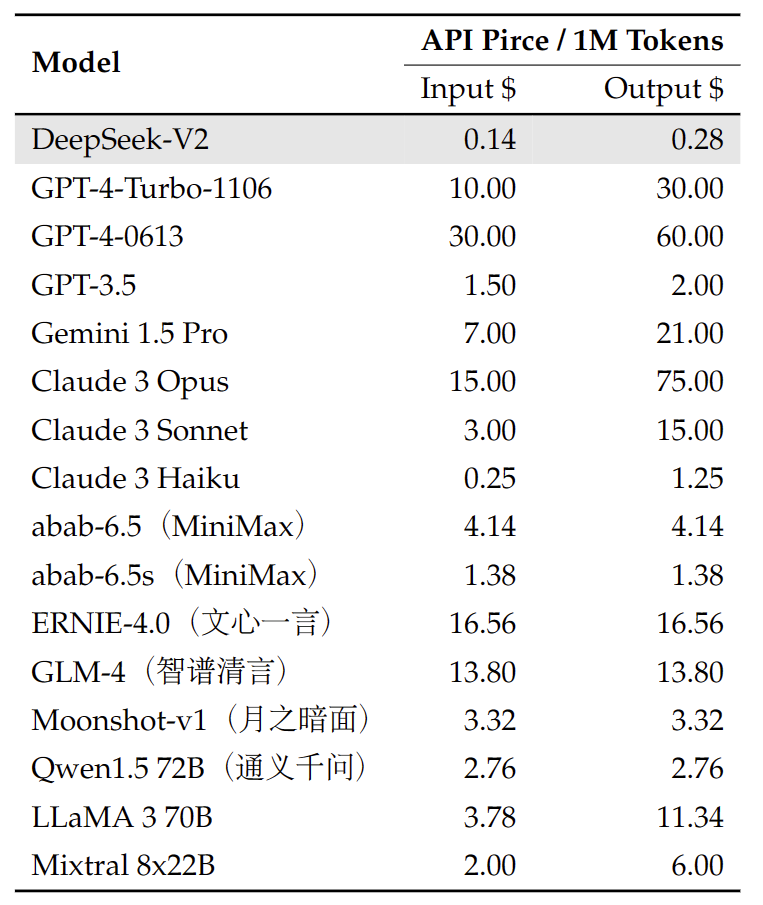

Compared with DeepSeek 67B, DeepSeek-V2 achieves stronger performance while saving 42.5% of training costs, reducing the KV cache by 93.3%, and boosting the maximum generation throughput to 5.76 times. Mixture of Experts (MoE) Architecture: DeepSeek-V2 adopts a mixture-of-experts mechanism, allowing the model to activate only a subset of its parameters during inference. As experts warn of potential risks, this milestone sparks debates on ethics, security, and regulation in AI development.
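To make the "activate only a subset of parameters" idea concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not DeepSeek-V2's actual routing code: the sizes (`DIM`, `NUM_EXPERTS`, `TOP_K`), the random weights, and every function name below are hypothetical and chosen only for illustration.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(weights, x):
    """Multiply a row-major matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def random_matrix(rows, cols, rng):
    """Hypothetical stand-in for trained weights."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def moe_forward(x, router, experts, top_k):
    """Route one token through only the top_k highest-scoring experts."""
    logits = matvec(router, x)  # one routing score per expert
    ranked = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)
    chosen = ranked[:top_k]
    # Gate weights are normalised over the chosen experts only.
    gates = softmax([logits[i] for i in chosen])
    out = [0.0] * len(x)
    for gate, idx in zip(gates, chosen):
        expert_out = matvec(experts[idx], x)  # only chosen experts are evaluated
        out = [o + gate * y for o, y in zip(out, expert_out)]
    return out, chosen


# Illustrative sizes (assumptions, not DeepSeek-V2's configuration).
DIM, NUM_EXPERTS, TOP_K = 4, 8, 2
rng = random.Random(0)
router = random_matrix(NUM_EXPERTS, DIM, rng)
experts = [random_matrix(DIM, DIM, rng) for _ in range(NUM_EXPERTS)]

token = [0.5, -1.0, 0.25, 2.0]
output, active = moe_forward(token, router, experts, TOP_K)
print(f"evaluated {len(active)} of {NUM_EXPERTS} experts: {sorted(active)}")
```

In this sketch each token touches the weights of only `TOP_K` experts, so per-token compute scales with `TOP_K` rather than `NUM_EXPERTS`, even though all expert weights still have to be stored.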

